In the article What Is Cloud Phone, a cloud phone is defined as a real Android device, and "real" extends beyond the processor. Physical sensors wired to the SoC generate data that platforms use to evaluate device trustworthiness. A typical scenario: TikTok checkpoints an account because its gyroscope has reported 0,0,0 for 3 straight hours, a clear emulator signal.

Platforms do not just check whether sensors exist; they inspect DATA PATTERNS, meaning the consistency between gyroscope, accelerometer, and GPS readings over time. This article explains what sensor data consistency is, the 4 sensor types that influence Trust Score, 3 platform inspection methods, and why ARM cloud phones pass sensor checks through physical hardware.

What Is Sensor Data Consistency — Why "Having Sensors" Is Not Enough

Sensor data consistency is the coherence of sensor readings that reflect real-world behavior over time and across sensors. Platforms inspect data patterns — not just sensor presence.

The core difference lies in 3 inspection levels:

Sensor data must exhibit natural variation. Having data is not enough; the data must reflect actual physical behavior. Emulators can return gyroscope values, but those values do not vary with temperature, lack a manufacturing bias, and do not correlate with accelerometer readings: 3 signals platforms detect.

Distinction: the Device Fingerprint article covers the 7 fingerprint components at a high level, while this article dives deep into sensor data patterns, the hidden inspection layer most users overlook.

3 examples of genuine consistency: natural variance (gyroscope fluctuates ±0.02°/s at rest), temperature drift (bias offset shifts 0.1–0.3°/s per 10°C increase), and cross-sensor correlation (GPS movement → accelerometer must report corresponding acceleration).

4 Sensor Data Types That Influence Trust Score

Account Trust Score depends on 4 types of sensor data, each carrying hardware- or environment-specific patterns (manufacturing bias, noise, drift) that software cannot convincingly replicate.

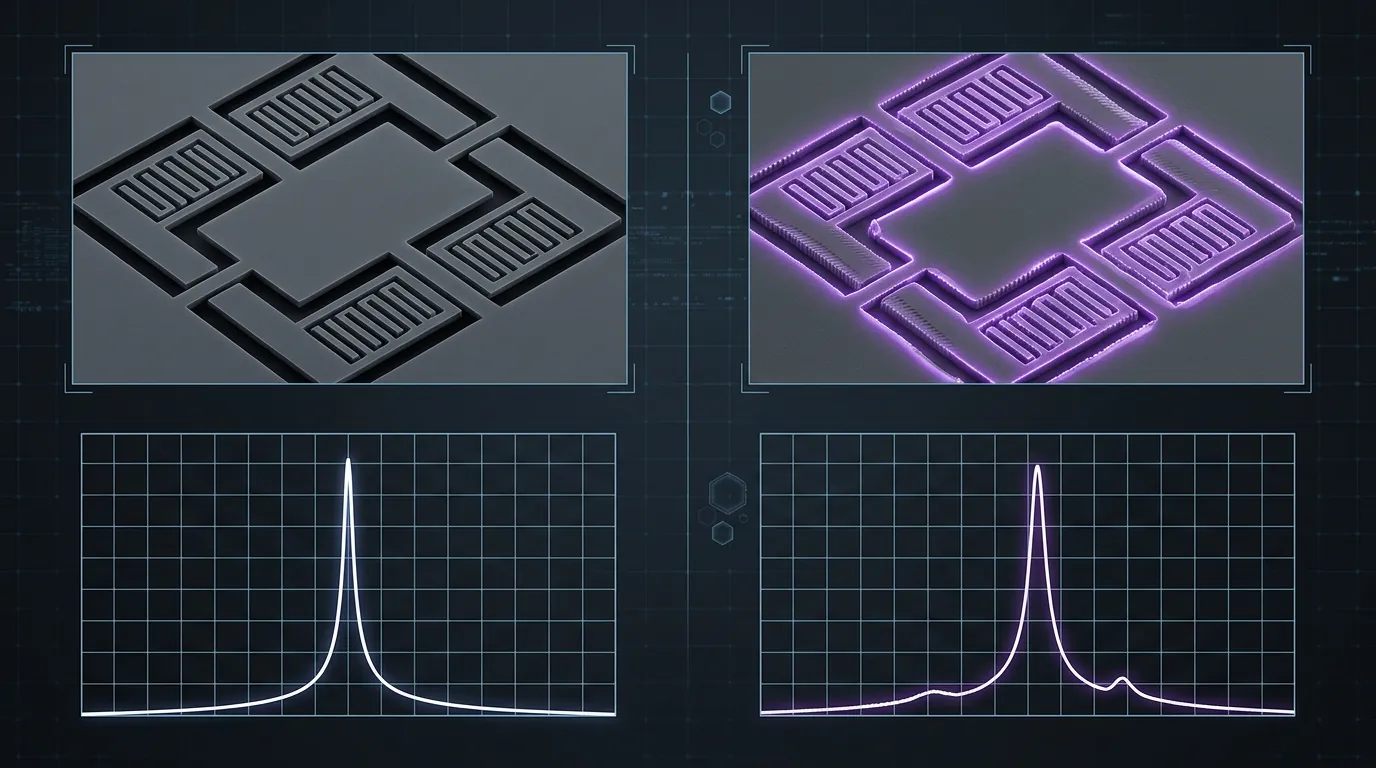

Gyroscope — Each Chip's Manufacturing Fingerprint

MEMS (Micro-Electro-Mechanical Systems) gyroscopes produce unique vibration patterns caused by microscopic manufacturing imperfections. No two MEMS chips are identical — each chip carries a unique resonance frequency and bias offset.

A MEMS gyroscope's resonance frequency can identify a device with over 96% accuracy, according to research presented at the NDSS Symposium. Nanometer-scale imperfections in the silicon structure create fixed, chip-specific bias offsets and noise patterns.

Real gyroscopes exhibit 3 characteristics emulators lack:

- Manufacturing bias: Fixed offset of 0.01–0.05°/s, unique per chip

- Temperature drift: Bias shifts linearly with temperature (0.015°/s per °C)

- Natural noise: Gaussian noise with a fixed variance (~0.003°/s RMS)

Emulators typically return static values (0,0,0) or repeating sine waves. Both lack manufacturing bias and temperature drift, which makes them easy for platforms to flag.
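To make the three characteristics concrete, here is a toy Python model of one resting gyroscope axis next to the two emulator patterns. It is a sketch under stated assumptions: the 0.03°/s bias is picked from the quoted 0.01–0.05°/s range, the 25 °C reference temperature and 50 Hz sample rate are illustrative, and all function names are hypothetical.

```python
import math
import random
import statistics

def real_gyro(n, temp_c, rng, bias_dps=0.03, drift_dps_per_c=0.015,
              noise_rms_dps=0.003, ref_temp_c=25.0):
    """Toy model of a resting MEMS gyro axis (hypothetical parameters).

    reading = fixed manufacturing bias
              + linear temperature drift relative to ref_temp_c
              + zero-mean Gaussian noise
    """
    drift = drift_dps_per_c * (temp_c - ref_temp_c)
    return [bias_dps + drift + rng.gauss(0.0, noise_rms_dps) for _ in range(n)]

def emulator_static(n):
    """Emulator pattern 1: the axis is pinned at exactly zero."""
    return [0.0] * n

def emulator_sine(n, rate_hz=50.0, amplitude_dps=0.02, period_s=10.0):
    """Emulator pattern 2: a clean, perfectly repeating sine wave."""
    return [amplitude_dps * math.sin(2 * math.pi * (i / rate_hz) / period_s)
            for i in range(n)]

rng = random.Random(42)
cool = real_gyro(5000, temp_c=25.0, rng=rng)
warm = real_gyro(5000, temp_c=35.0, rng=rng)
# The modeled mean moves with temperature (0.015 °/s per °C, so +0.15 °/s for
# +10 °C); neither emulator pattern has a bias or reacts to temperature.
print(f"25 °C mean: {statistics.fmean(cool):.3f} °/s")
print(f"35 °C mean: {statistics.fmean(warm):.3f} °/s")
print(f"static mean: {statistics.fmean(emulator_static(5000)):.3f} °/s")
```

The point of the model is that bias and drift are properties of the physical chip and its environment; an emulator would have to invent both and keep them consistent over hours.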

Accelerometer — No Two Chips Share the Same Bias Offset

Accelerometer bias offset creates a unique measurement signature — each chip measures gravity with a small but fixed and distinct error.

On a real device, a horizontally placed accelerometer reports: acceleration X = 0.012 m/s², Y = -0.008 m/s², Z = 9.795 m/s² (instead of exactly 9.81). The 0.012 and -0.008 values are bias offsets — fixed, unique, and measurable per chip. These offsets remain stable across reboots and over time, forming a persistent hardware signature. Emulators return "perfect" values (0, 0, 9.81) or randomized data — neither matches a real manufacturing bias.
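The bias offsets in this example can be estimated by averaging readings taken while the device lies flat and subtracting the ideal (0, 0, 9.81). The Python sketch below assumes exactly that resting, Z-up pose; the tolerances, the simulated noise level, and the helper names are hypothetical, and real calibration procedures use several orientations.

```python
import random
import statistics

GRAVITY = 9.81  # reference value used in the text, m/s²

def estimate_bias(samples, g=GRAVITY):
    """Per-axis offset from (x, y, z) readings of a device lying flat, Z up.

    The ideal reading in that pose is (0, 0, g); the mean residual is the bias.
    """
    xs, ys, zs = zip(*samples)
    return (statistics.fmean(xs), statistics.fmean(ys), statistics.fmean(zs) - g)

def looks_too_perfect(bias, tol=1e-6):
    """True when every axis matches the ideal value almost exactly."""
    return all(abs(b) < tol for b in bias)

def bias_is_stable(bias_a, bias_b, tol=0.002):
    """Compare offsets from two sessions (e.g. before and after a reboot)."""
    return all(abs(a - b) < tol for a, b in zip(bias_a, bias_b))

def real_session(rng, n=500):
    # Offsets from the example above plus small hypothetical measurement noise.
    return [(0.012 + rng.gauss(0, 0.002),
             -0.008 + rng.gauss(0, 0.002),
             9.795 + rng.gauss(0, 0.002)) for _ in range(n)]

rng = random.Random(1)
before = estimate_bias(real_session(rng))
after = estimate_bias(real_session(rng))
emulated = estimate_bias([(0.0, 0.0, 9.81)] * 500)
print([round(b, 3) for b in before])   # close to the chip's fixed offsets
print(bias_is_stable(before, after))   # same chip, same offsets across sessions
print(looks_too_perfect(emulated))     # "perfect" emulator values
```

A randomized emulator would fail the stability check instead: each session would yield a different offset.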

Cross-correlation between accelerometer and gyroscope also serves as a detection signal: when the device rotates, the gyroscope reports angular velocity → the accelerometer must show the gravity vector shifting between its axes by the same angle. Emulators that fake each sensor independently → cross-correlation mismatch. The angle integrated from the gyroscope must agree with the change in gravity direction seen by the accelerometer, a physical constraint that scripted values rarely satisfy.
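A simplified single-axis version of this check: integrate the gyroscope's X-axis rate and compare the result with the tilt angle implied by gravity on the accelerometer's Y and Z axes. The sketch assumes slow rotation with no linear acceleration and no gyro bias, uses an arbitrary axis convention, and is not a description of any specific platform's detector; the function names are hypothetical.

```python
import math

G = 9.81

def tilt_from_accel(ay, az):
    """Roll angle (degrees) about X implied by gravity, for a slowly moving device."""
    return math.degrees(math.atan2(ay, az))

def max_mismatch_deg(gyro_x_dps, accel_yz, dt_s):
    """Largest disagreement between the integrated gyro angle and the accel tilt."""
    theta = tilt_from_accel(*accel_yz[0])  # start both estimates at the same angle
    worst = 0.0
    for rate, (ay, az) in zip(gyro_x_dps[1:], accel_yz[1:]):
        theta += rate * dt_s               # gyro: integrate angular velocity
        worst = max(worst, abs(theta - tilt_from_accel(ay, az)))
    return worst

def gravity_yz(angle_deg):
    """Gravity components on Y and Z after rolling the device by angle_deg."""
    r = math.radians(angle_deg)
    return (G * math.sin(r), G * math.cos(r))

dt, rate, n = 0.01, 30.0, 100   # 1 s of rotation at 30 °/s, sampled at 100 Hz
gyro = [rate] * n
real_accel = [gravity_yz(rate * dt * i) for i in range(n)]  # gravity follows the rotation
fake_accel = [gravity_yz(0.0)] * n                          # faked independently: never moves

print(f"real: {max_mismatch_deg(gyro, real_accel, dt):.6f} deg")
print(f"fake: {max_mismatch_deg(gyro, fake_accel, dt):.1f} deg")
```

On the consistent stream the two angle estimates agree to floating-point precision; on the independently faked stream they drift apart by the full rotation.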

GPS — Location Consistency and Movement Correlation

GPS accuracy variability creates natural noise patterns — varying with environment, surrounding buildings, and satellite signals.

Real devices in urban areas exhibit GPS drift of ±6 meters due to signal reflections from buildings (multipath effect). Indoors, GPS weakens or drops → the device switches to Wi-Fi positioning with ±15 meter accuracy. Driving creates a speed profile that matches accelerometer data.

Emulators exhibit 3 abnormal GPS patterns:

- Fixed coordinates: Unchanging coordinates for hours → red flag

- Teleport: Jumping from New York to Los Angeles in 1 minute → physically impossible

- Perfect accuracy: No drift or multipath effects → too "perfect" to be real
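The three abnormal patterns translate into simple rules over a sequence of GPS fixes. This Python sketch uses the haversine formula for distance; the 300 m/s speed ceiling, the 3-hour fixed-location window (echoing the opening example), and the 1 m "too perfect" accuracy cut-off are hypothetical thresholds, not values any platform has published.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0

@dataclass
class Fix:
    t_s: float         # timestamp, seconds
    lat: float         # degrees
    lon: float         # degrees
    accuracy_m: float  # reported horizontal accuracy, meters

def haversine_m(a, b):
    """Great-circle distance between two fixes, in meters."""
    p1, p2 = math.radians(a.lat), math.radians(b.lat)
    dp, dl = p2 - p1, math.radians(b.lon - a.lon)
    h = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))

def gps_flags(fixes, max_speed_mps=300.0, fixed_window_s=3 * 3600,
              min_accuracy_m=1.0):
    flags = set()
    # Teleport: implied speed between consecutive fixes is physically implausible.
    for a, b in zip(fixes, fixes[1:]):
        dt = b.t_s - a.t_s
        if dt > 0 and haversine_m(a, b) / dt > max_speed_mps:
            flags.add("teleport")
    # Fixed coordinates: identical position for the whole (long) window.
    if (fixes[-1].t_s - fixes[0].t_s >= fixed_window_s
            and len({(f.lat, f.lon) for f in fixes}) == 1):
        flags.add("fixed_coordinates")
    # Perfect accuracy: no fix ever reports multipath-level uncertainty.
    if all(f.accuracy_m < min_accuracy_m for f in fixes):
        flags.add("perfect_accuracy")
    return flags

teleport = [Fix(0, 40.7128, -74.0060, 5.0), Fix(60, 34.0522, -118.2437, 5.0)]
frozen = [Fix(t, 40.7128, -74.0060, 0.0) for t in range(0, 3 * 3600 + 1, 600)]
urban = [Fix(10 * i, 40.7128 + 0.00003 * (i % 3), -74.0060, 6.0) for i in range(20)]

print(sorted(gps_flags(teleport)))  # New York to Los Angeles in 1 minute
print(sorted(gps_flags(frozen)))    # same point, zero uncertainty, for 3 hours
print(sorted(gps_flags(urban)))     # a few meters of jitter, ±6 m accuracy
```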

Movement correlation is a critical check: GPS reports 60 km/h travel → accelerometer must report acceleration → gyroscope must report vibration. Missing correlation = fake movement.

Light Sensor and Magnetometer — Supplementary Signals

Light sensor readings change with real ambient lighting conditions — static values for hours = anomalous signal. Magnetometers are affected by nearby electronics, producing a unique signature based on device placement.

Both sensors serve a supplementary role, strengthening cross-signal validation when combined with gyroscope, accelerometer, and GPS data.

How Platforms Inspect Sensor Consistency — 3 Methods

Platforms detect fake sensors through 3 inspection methods — from temporal analysis to machine learning, each exploiting a different weakness of emulated sensors.

Temporal Analysis — Time-Based Pattern Inspection

Temporal analysis collects sensor data over minutes to hours to build a pattern profile. This method exploits a fundamental difference: real sensors exhibit natural variance, fake sensors produce repeating patterns.

On real devices, a resting gyroscope fluctuates ±0.02°/s in a Gaussian pattern with slow temperature drift. On emulators, values are either fixed at 0,0,0 (static) or oscillate in a sine wave with fixed periodicity (periodic). Both static and periodic patterns differ clearly from genuine Gaussian noise — platforms distinguish them through statistical analysis (variance, kurtosis, autocorrelation).
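The three statistics named above can be computed in a few lines. The Python sketch below classifies a window of resting gyroscope samples; the decision thresholds (autocorrelation above 0.9, excess kurtosis within ±0.5) and the assumption that the candidate period is already known are illustrative simplifications. A production detector would scan many lags and combine far more features.

```python
import math
import random
import statistics

def excess_kurtosis(xs):
    """Fourth standardized moment minus 3 (about 0 for Gaussian, -1.5 for a sine)."""
    m = statistics.fmean(xs)
    var = statistics.pvariance(xs, m)
    m4 = statistics.fmean([(x - m) ** 4 for x in xs])
    return m4 / var ** 2 - 3.0

def autocorr(xs, lag):
    """Autocorrelation at a given lag, normalized by the overlap length."""
    m = statistics.fmean(xs)
    var = statistics.pvariance(xs, m)
    overlap = len(xs) - lag
    cov = sum((xs[i] - m) * (xs[i + lag] - m) for i in range(overlap)) / overlap
    return cov / var

def classify(xs, lag):
    if statistics.pvariance(xs) == 0:
        return "static"            # e.g. 0,0,0 for hours
    if autocorr(xs, lag) > 0.9:
        return "periodic"          # repeats itself at the tested lag
    if abs(excess_kurtosis(xs)) < 0.5:
        return "gaussian-like"     # consistent with real sensor noise
    return "other"

rng = random.Random(7)
n, period = 5000, 500             # 100 s at 50 Hz; sine period of 10 s
real = [0.03 + rng.gauss(0, 0.003) for _ in range(n)]
sine = [0.02 * math.sin(2 * math.pi * i / period) for i in range(n)]
static = [0.0] * n

for name, xs in [("real", real), ("sine", sine), ("static", static)]:
    print(name, classify(xs, lag=period))
```

The checks run in order of cost: a zero-variance test catches static output, autocorrelation catches repetition, and kurtosis then asks whether the remaining noise has a Gaussian shape.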

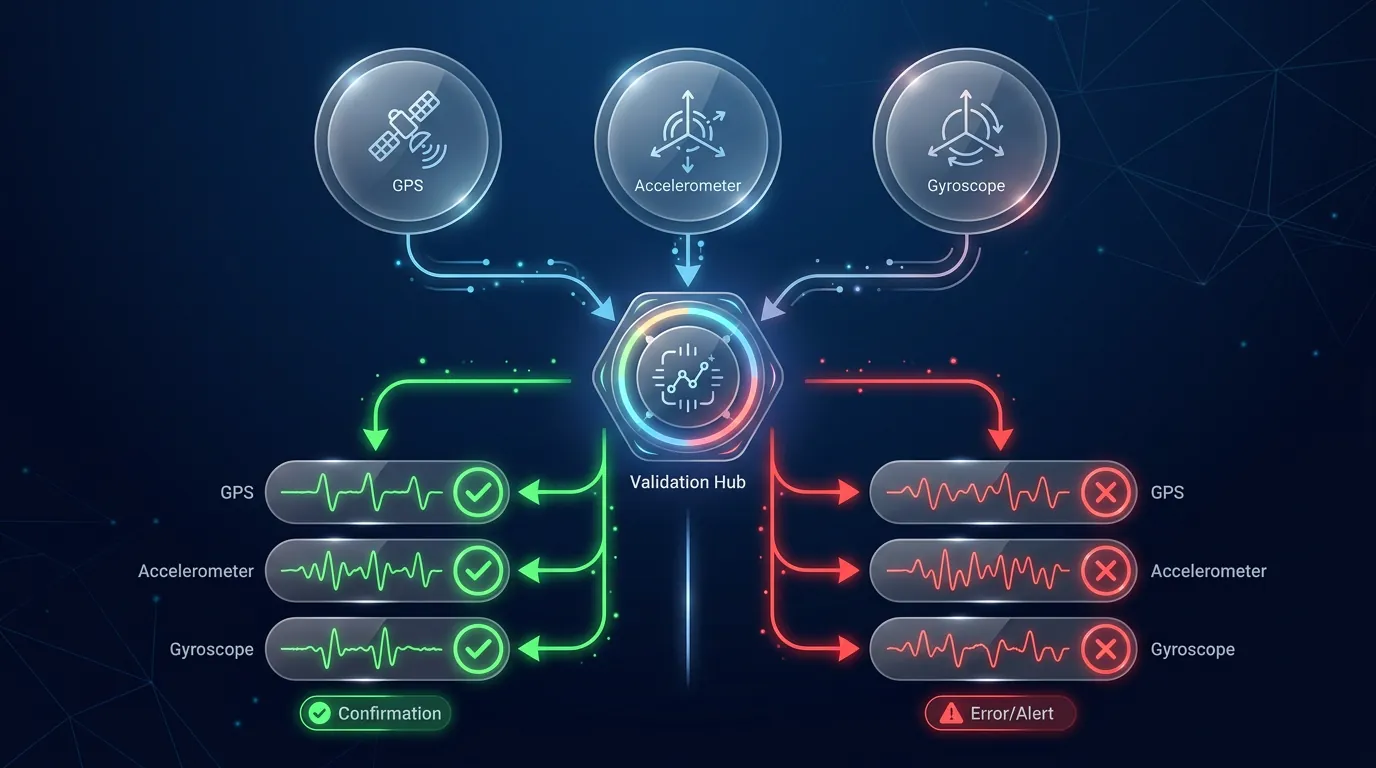

Cross-Signal Validation — GPS × Gyroscope × Accelerometer

Cross-signal validation detects mismatches between GPS, gyroscope, and accelerometer — all 3 sensors must report consistent data about device state.

Inspection process:

- GPS reports movement → accelerometer must report corresponding acceleration

- Accelerometer reports acceleration → gyroscope must report matching angular rotation

- GPS is stationary → accelerometer magnitude must stay near 9.81 m/s² (gravity alone)

Emulators that fake each sensor independently → when cross-validated, the 3 sensors report contradictory data → quickly detected. On a real device, all 3 sensors sit on the same mainboard and experience the same physical motion → their signals are naturally consistent.

Cross-signal validation is effective because it requires emulators to fake 3 sensors simultaneously with precise correlation — a far more complex challenge than faking 1 sensor.
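The three rules in the inspection process above can be expressed as checks over a short window of synchronized readings. In this Python sketch, the window structure, the 2 m/s movement cut-off, the activity thresholds, and the ±0.3 m/s² gravity tolerance are all hypothetical; real systems learn such thresholds rather than hard-coding them.

```python
import math
import random
import statistics
from dataclasses import dataclass

G = 9.81  # m/s²

@dataclass
class Window:
    gps_speed_mps: float
    accel_xyz: list  # (x, y, z) tuples in m/s²
    gyro_xyz: list   # (x, y, z) tuples in °/s

def _mag(v):
    return math.sqrt(sum(c * c for c in v))

def cross_signal_violations(w, moving_mps=2.0, accel_std_min=0.05,
                            gyro_std_min=0.05, gravity_tol=0.3):
    a = [_mag(v) for v in w.accel_xyz]
    g = [_mag(v) for v in w.gyro_xyz]
    accel_active = statistics.pstdev(a) > accel_std_min
    gyro_active = statistics.pstdev(g) > gyro_std_min
    issues = []
    # Rule 1: GPS movement must show up as acceleration and vibration.
    if w.gps_speed_mps > moving_mps and not accel_active:
        issues.append("GPS moving but accelerometer flat")
    # Rule 2: acceleration must come with matching rotation.
    if accel_active and not gyro_active:
        issues.append("accelerometer active but gyroscope flat")
    # Rule 3: a stationary device should measure roughly gravity alone.
    if w.gps_speed_mps <= moving_mps and abs(statistics.fmean(a) - G) > gravity_tol:
        issues.append("stationary but |a| far from gravity")
    return issues

rng = random.Random(3)
driving = Window(16.7,  # about 60 km/h
                 [(rng.gauss(0, 0.5), rng.gauss(0, 0.5), G + rng.gauss(0, 0.5)) for _ in range(50)],
                 [(rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(50)])
fake_drive = Window(16.7, [(0.0, 0.0, G)] * 50, [(0.0, 0.0, 0.0)] * 50)
fake_rest = Window(0.0, [(0.0, 0.0, 0.0)] * 50, [(0.0, 0.0, 0.0)] * 50)

for name, w in [("driving", driving), ("fake_drive", fake_drive), ("fake_rest", fake_rest)]:
    print(name, cross_signal_violations(w))
```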

Machine Learning Anomaly Detection

ML models detect fake sensors by learning from millions of genuine device profiles, then scoring the probability that incoming data is authentic rather than emulated. These models operate continuously in the background, analyzing sensor streams in real time rather than performing one-time checks.

ML models trained on 500,000+ device profiles achieve over 97% accuracy in distinguishing genuine from emulated sensor data, according to ExaProtocol research. Input features include: gyroscope time-series data, accelerometer bias patterns, GPS drift characteristics, cross-signal correlation matrices, and temporal consistency scores. Output: a probability score indicating whether the device is genuine (0.0–1.0). Scores below a platform-defined threshold trigger additional verification steps.
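The output stage described above, a 0.0–1.0 genuineness score compared against a threshold, can be illustrated with a logistic function over the listed feature groups. This is a stand-in sketch, not any platform's model: the weights, intercept, and threshold are invented for illustration, each feature is assumed to be pre-normalized to 0.0–1.0, and real models are proprietary and learned from data rather than hand-set.

```python
import math

# Hypothetical, hand-set weights for illustration only.
# Positive weight = evidence that the device is genuine.
WEIGHTS = {
    "gyro_time_series": 2.0,         # 1.0 = Gaussian-like noise, 0.0 = static/periodic
    "accel_bias_pattern": 1.5,       # 1.0 = small, stable, non-zero bias
    "gps_drift": 1.0,                # 1.0 = realistic multipath jitter
    "cross_signal_correlation": 2.5, # 1.0 = sensors agree with each other
    "temporal_consistency": 1.5,
}
INTERCEPT = -4.0
THRESHOLD = 0.5  # platform-defined in practice; 0.5 is arbitrary here

def genuine_probability(features):
    """Logistic score in 0.0-1.0; missing features count as 0.0."""
    z = INTERCEPT + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))

def needs_verification(features, threshold=THRESHOLD):
    return genuine_probability(features) < threshold

real_device = {name: 1.0 for name in WEIGHTS}
emulator = {name: 0.0 for name in WEIGHTS}
print(f"real: {genuine_probability(real_device):.3f}, verify={needs_verification(real_device)}")
print(f"emulator: {genuine_probability(emulator):.3f}, verify={needs_verification(emulator)}")
```

A trained classifier would learn these weights (and interactions between features) from labeled device profiles; the sketch only shows how a score below the threshold maps to an extra verification step.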

Major platforms including Google (Play Integrity), Meta (Facebook), and ByteDance (TikTok) run proprietary detection models across device populations numbering in the billions, and every emulated device that passes through the system can feed back into training. This creates an asymmetric advantage: the more emulators try to bypass detection, the better the models become at identifying them.

Trust Score — What Percentage Does Sensor Data Contribute?

Sensor data contributes an estimated 15–25% of the weight in an account's overall Trust Score, varying by platform and activity type.

Trust Score aggregates multiple signal sources:

High sensor consistency → fewer checkpoints, fewer verifications. Inconsistent sensors → Trust Score drops → more frequent checkpoints. Banking platforms set higher sensor thresholds than gaming platforms.
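As a rough illustration of how a sensor sub-score can move the outcome, here is a weighted-sum sketch in Python. Only the 20% sensor weight comes from the text (the midpoint of the 15–25% estimate); the other signal groups, their weights, the 0.6 overall cut-off, and the per-platform sensor thresholds are hypothetical.

```python
# Hypothetical weights; only "sensor" (0.20) reflects the 15–25% estimate above.
WEIGHTS = {"chip_isa": 0.25, "sensor": 0.20, "network": 0.25, "other": 0.30}
# Hypothetical minimum sensor sub-scores: banking stricter than gaming.
SENSOR_THRESHOLD = {"banking": 0.8, "gaming": 0.5}

def trust_score(signals):
    """Weighted sum of sub-scores, each assumed to be normalized to 0.0-1.0."""
    return sum(weight * signals[name] for name, weight in WEIGHTS.items())

def checkpoint_likely(signals, platform, overall_min=0.6):
    """A weak sensor sub-score can trigger a checkpoint even if the total is high."""
    return (signals["sensor"] < SENSOR_THRESHOLD[platform]
            or trust_score(signals) < overall_min)

signals = {"chip_isa": 1.0, "sensor": 0.6, "network": 1.0, "other": 1.0}
print(round(trust_score(signals), 2))          # overall score stays high...
print(checkpoint_likely(signals, "banking"))   # ...but the stricter sensor threshold trips
print(checkpoint_likely(signals, "gaming"))
```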

Trust chain connection: native ISA (ISA ARM v8-A vs x86 article) confirms real chip → sensor data consistency (this article) confirms physical behavior → network fingerprint (next article) confirms real network → overall Trust Score.

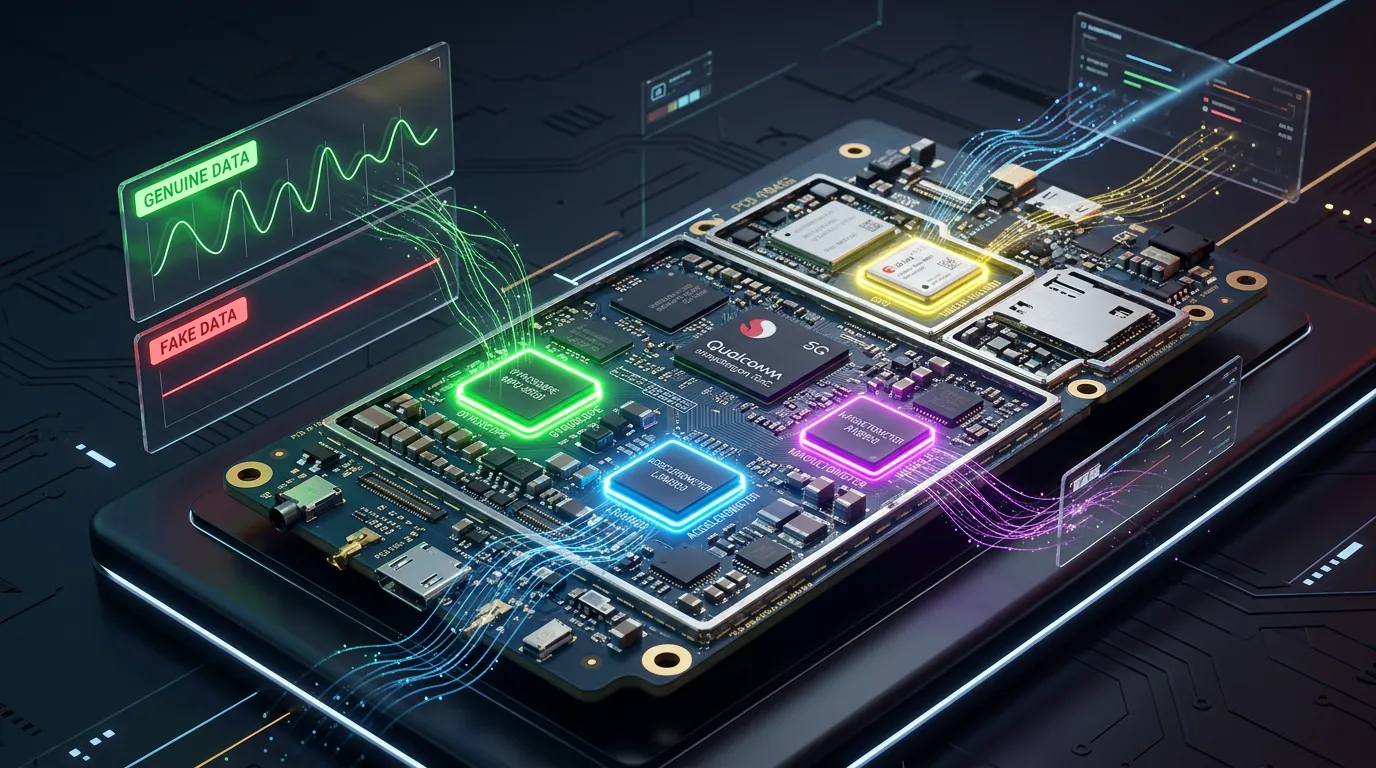

ARM Cloud Phone — Real Sensor Data From Physical SoC

An ARM cloud phone delivers genuine sensor data from physical hardware: the gyroscope and accelerometer on each Exynos 8895 mainboard produce authentic manufacturing fingerprints that software cannot replicate.

Comparison across 3 platforms:

XCloudPhone uses the Exynos 8895 — an SoC with a real hardware sensor controller. The MEMS gyroscope on each mainboard carries a unique manufacturing fingerprint, the accelerometer has a distinct bias offset, and the GPS receiver is genuine. Sensor data from physical hardware passes all 3 inspection methods: temporal analysis (natural variance), cross-signal validation (sensors correlate), and ML detection (matches genuine profile).

Pricing is ~$10/device on a pay-as-you-go model. Compared with traditional emulators, this setup significantly reduces the risk of checkpoints and account restrictions. For phone farm operators managing multiple accounts, a real cloud phone with genuine sensor data provides a hardware profile that software simulation cannot match.

👉 Experience a real cloud phone with genuine sensor data: app.xcloudphone.com

FAQ — Sensor Data and Trust Score

"Can emulators fake sensor data?"

Yes, but fake data produces static or periodic patterns — platforms detect it through temporal analysis and cross-signal validation. Manufacturing bias, temperature drift, and cross-sensor correlation are 3 factors software cannot replicate accurately.

"Does an ARM cloud phone have sensors identical to a handheld phone?"

Yes. Each unit runs on real phone hardware with a hardware sensor controller, and its MEMS chips give every mainboard a unique manufacturing fingerprint. The MEMS gyroscope, accelerometer, and GPS receiver on an ARM cloud phone function identically to those in a handheld device.

"Does Trust Score affect Facebook/TikTok checkpoints?"

It directly affects them — sensor data consistency is one component in trust evaluation. High Trust Score → fewer checkpoints. Low Trust Score (static sensors, cross-signal mismatch) → more frequent checkpoints.

"How important is GPS consistency for account farming?"

Critically important — GPS teleportation or prolonged fixed locations = immediate red flag. Platforms inspect GPS drift patterns, movement correlation with accelerometer data, and location plausibility over time.

From Sensor Data to Account Protection Strategy

Sensor data consistency is not just about "having sensors" — it is about natural DATA PATTERNS, unique manufacturing fingerprints, and cross-signal correlation between gyroscope, accelerometer, and GPS over time. This is the hidden inspection layer that emulators cannot defeat.

An ARM cloud phone with physical sensors produces genuine manufacturing fingerprints, passing all 3 platform inspection methods. Combined with native ISA (ISA ARM v8-A vs x86 article) and real network identity (Network Fingerprinting article), sensor data completes the Trust Score picture every account needs.

Learn more about what a cloud phone is in the overview article What Is Cloud Phone, or explore how device fingerprinting works in the Device Fingerprint article.

👉 Start with a real ARM cloud phone: app.xcloudphone.com